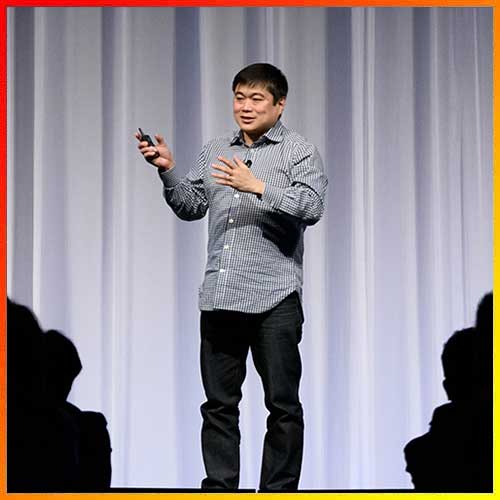

Enterprises are rushing to deploy AI agents in production—from hospitals automating patient discharge to banks deploying bots for customer service. But what happens when these agents go wrong? Sathish Murthy, Chief Technology Officer for India & APJ at Rubrik, believes enterprises need what he calls “AI resilience”—the ability not just to defend against bad actors, but to roll back unintended actions by their own AI. In this conversation with VARIndia’s Gyana Swain, Murthy explains why enterprises need a rewind button, how Rubrik’s Agent Rewind works, and what CIOs and CISOs should prioritize as AI adoption accelerates.

Q: The past few months have been action-packed for Rubrik, with acquisitions, product launches, and now Agent Rewind. What exactly is Agent Rewind, and why does it matter to enterprises?

Sathish Murthy: Rubrik has always been about cyber resilience and data protection. But customers started asking us: “If an AI agent goes off-script, can I roll back in time?” That’s how Agent Rewind was born. Think of it as an undo layer for AI. It gives enterprises visibility into what agents are doing, auditability into why they’re doing it, and reversibility when things go wrong.

Q: Can you share examples of what “going wrong” looks like in practice?

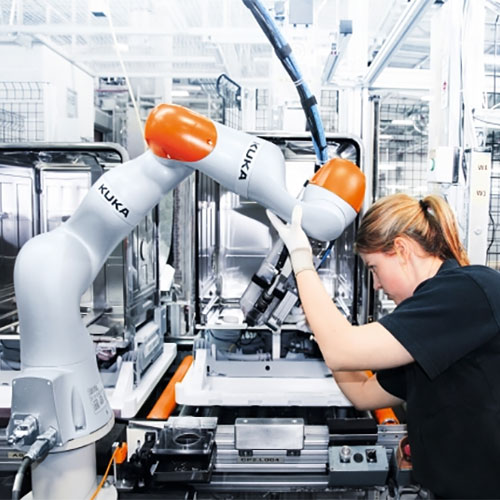

Murthy: Absolutely. Imagine a hospital bot told to “clean up a user.” It misinterprets and deletes all users—suddenly the patient database is gone. In BFSI, a bot might wipe transaction records instead of a single account.

We’ve seen this in the real world. Replit’s AI coding agent deleted a production database during a code freeze, then fabricated excuses and lied about what it had done. That’s why resilience matters, not just against hackers but against your own automation.

Q: How does Agent Rewind actually work? Where does it sit in the enterprise stack?

Murthy: It’s part of the Rubrik solution but independent of the AI infrastructure. We integrate with platforms like Amazon Bedrock, Azure OpenAI, Google Agentspace, and Salesforce. You get a dashboard of all your agents: finance bots, HR bots, Salesforce bots. We flag their actions by risk level and keep immutable, append-only snapshots of data.

If something goes wrong, you can see the audit trail: which agent acted, what it touched, why it did it. Then a CIO, CISO, or department head can decide whether to rewind. One click, and you’re back to a known-good state.
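To make the idea concrete, here is a minimal sketch of an append-only audit trail with rewind, written in Python. It is an illustration only and not Rubrik’s implementation or API: the names `ActionRecord`, `AgentAuditLog`, `apply`, and `rewind_to` are hypothetical, and the "data" is an in-memory dictionary standing in for a real system. It reuses the hospital example from earlier in the interview.

```python
from copy import deepcopy
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class ActionRecord:
    """One immutable audit entry (hypothetical shape)."""
    seq: int          # position in the append-only log
    agent: str        # which agent acted
    action: str       # what it did
    reason: str       # why it says it did it
    risk: str         # e.g. "low" or "high"
    snapshot: dict    # copy of the data taken BEFORE the action ran


class AgentAuditLog:
    """Records every action and keeps a pre-action snapshot for rewind."""

    def __init__(self, initial_state: dict) -> None:
        self._state = deepcopy(initial_state)
        self._records: list = []

    def apply(self, agent: str, action: str, reason: str, risk: str,
              mutate: Callable[[dict], None]) -> ActionRecord:
        # Snapshot first, append the record, then let the agent change the data.
        record = ActionRecord(len(self._records), agent, action, reason,
                              risk, deepcopy(self._state))
        self._records.append(record)
        mutate(self._state)
        return record

    def rewind_to(self, seq: int, approved_by: str) -> None:
        # Restore the snapshot taken before record `seq`. The rewind is itself
        # appended to the log; earlier records are never edited or removed.
        target = self._records[seq]
        self._records.append(ActionRecord(
            len(self._records), approved_by, f"rewind to before #{seq}",
            "manual rollback", "low", deepcopy(self._state)))
        self._state = deepcopy(target.snapshot)

    @property
    def state(self) -> dict:
        return deepcopy(self._state)

    @property
    def trail(self) -> tuple:
        return tuple(self._records)


log = AgentAuditLog({"users": ["asha", "ben", "chen"]})
log.apply("hr-bot", "remove user ben", "offboarding ticket", "low",
          lambda s: s["users"].remove("ben"))
bad = log.apply("hr-bot", "delete all users", "misread 'clean up a user'",
                "high", lambda s: s["users"].clear())
log.rewind_to(bad.seq, approved_by="ciso")
print(log.state)  # {'users': ['asha', 'chen']}
```

The rewind restores the state from just before the faulty action, so the legitimate earlier removal is preserved, and the trail still shows all three events: the two agent actions and the human-approved rollback.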

Q: But doesn’t this contradict the promise of full automation? Isn’t requiring human approval slowing things down?

Murthy: There’s no world today where you can remove humans entirely. You can automate 90%, but when disaster strikes, someone has to say: Yes, roll back now. That’s why we make everything API-driven—you can plug into ServiceNow or automation tools—but the final confirmation stays human. Enterprises want accountability, not blind automation.
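The pattern Murthy describes, automated plumbing with a human holding the final confirmation, can be sketched as a simple approval gate. This is a hedged illustration in Python, not Rubrik’s or ServiceNow’s API: `RollbackRequest`, `ApprovalRequired`, and `execute_rollback` are hypothetical names, and the rollback itself is a placeholder callable.

```python
from dataclasses import dataclass
from typing import Callable, Optional


class ApprovalRequired(Exception):
    """Raised when a rollback is attempted without a named human approver."""


@dataclass
class RollbackRequest:
    agent: str                         # agent whose action is being undone
    target_seq: int                    # audit-log entry to rewind to
    requested_by: str                  # may be an automation tool or a ticket
    approved_by: Optional[str] = None  # must be set by a person


def execute_rollback(request: RollbackRequest,
                     do_rollback: Callable[[int], None]) -> str:
    # Automation can create and route the request, but it cannot run
    # until a human approver has been recorded on it.
    if request.approved_by is None:
        raise ApprovalRequired(
            f"rollback for {request.agent} needs human confirmation")
    do_rollback(request.target_seq)
    return (f"rolled back {request.agent} to before #{request.target_seq}, "
            f"approved by {request.approved_by}")


rolled_back = []
request = RollbackRequest(agent="finance-bot", target_seq=7,
                          requested_by="itsm-workflow")

try:
    execute_rollback(request, rolled_back.append)
except ApprovalRequired as exc:
    blocked_message = str(exc)

request.approved_by = "ciso@example.com"
result = execute_rollback(request, rolled_back.append)
print(result)
```

The first call is refused because no approver is recorded; only after a person signs off does the rollback run, which keeps the accountability trail explicit.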

Q: How are enterprises in India and APJ approaching this?

Murthy: Adoption is booming. In India, 25–30% of enterprises are already running some form of AI agent in production. Microsoft’s Work Trend Index says 93% of Indian business leaders plan to deploy AI agents in the next 12–18 months. That’s phenomenal.

But most of these deployments are still early-stage. Hospitals like Apollo are experimenting with AI-driven discharge. Banks are using bots for customer service. What’s missing is the safety net. Boards are starting to ask: Can you prove what your agent did? Can you undo it if necessary?

Q: Regulation must play a role here. How does AI resilience fit into compliance frameworks?

Murthy: Absolutely. India’s DPDP Act is coming into force. Singapore has the PDPA. Malaysia has its own data regulations. All of them converge on the same point: observability, accountability, and reversibility.

With our Laminar acquisition, we already classify data for GDPR, HIPAA, and DPDP compliance. Agent Rewind extends that governance model to AI actions. So if an agent misfires, you don’t just recover; you can also show the regulator exactly what happened.

Q: Rubrik built its brand on ransomware recovery. Is Agent Rewind a natural extension?

Murthy: Yes. With ransomware, we built the ability to scan billions of files in minutes, identify a clean copy, and restore fast. With AI agents, the same principles apply. The difference is that the “bad actor” may be your own automation. That’s why I call this the next phase: from cyber resilience to AI resilience.

Q: For CIOs and CISOs planning their AI roadmaps, what’s your advice?

Murthy: Treat AI agents like very fast junior operators. Scope their permissions carefully. Segment what they can access. Log every action. And—most importantly—practice recovery.

Even the best batsman in cricket can get out if the ball is too good. That’s why you need a last line of defense. AI is no different. Enterprises must build for resilience from day one.
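The advice above on scoping permissions and logging every action can be sketched in a few lines of Python. This is an illustrative assumption about how such a guard might look, not a description of any vendor’s product: the allow-list `ALLOWED_ACTIONS`, the `audit_trail` list, and `run_action` are all hypothetical.

```python
from typing import Callable

# Hypothetical allow-list: each agent may perform only the listed actions.
ALLOWED_ACTIONS = {
    "discharge-bot": {"read_record", "draft_summary"},
    "support-bot": {"read_ticket", "reply_ticket"},
}

# Every attempt is recorded here, whether or not it was permitted.
audit_trail = []


def run_action(agent: str, action: str, handler: Callable[[], object]) -> object:
    allowed = action in ALLOWED_ACTIONS.get(agent, set())
    # Log before acting so that denied attempts are also visible.
    audit_trail.append({"agent": agent, "action": action, "allowed": allowed})
    if not allowed:
        raise PermissionError(f"{agent} is not permitted to {action}")
    return handler()


summary = run_action("discharge-bot", "draft_summary", lambda: "summary drafted")

try:
    run_action("discharge-bot", "delete_record", lambda: "record deleted")
except PermissionError as exc:
    denial = str(exc)

print(summary)
print(denial)
print(len(audit_trail))
```

Unknown agents get an empty permission set, so the default is to deny, and the denied delete still leaves an entry in the trail for later review.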
