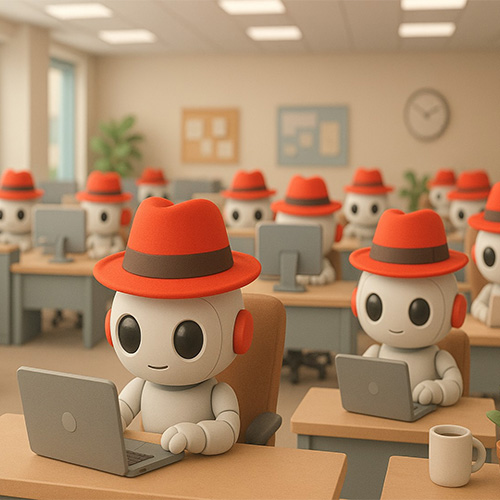

Red Hat has announced Red Hat AI 3, a significant evolution of its enterprise AI platform. Bringing together the latest innovations of Red Hat AI Inference Server, Red Hat Enterprise Linux AI (RHEL AI) and Red Hat OpenShift AI, the platform helps simplify the complexities of high-performance AI inference at scale, enabling organizations to more readily move workloads from proofs-of-concept to production and improve collaboration around AI-enabled applications.

Red Hat AI 3 addresses these complexities directly by providing a more consistent, unified experience for CIOs and IT leaders to maximize their investments in accelerated computing technologies. It makes it possible to rapidly scale and distribute AI workloads across hybrid, multi-vendor environments while improving cross-team collaboration on next-generation AI workloads like agents, all on the same common platform. With a foundation built on open standards, Red Hat AI 3 meets organizations where they are on their AI journey, supporting any model on any hardware accelerator, from datacenters to public cloud and sovereign AI environments to the farthest edge.

As organizations move AI initiatives into production, the emphasis shifts from training and tuning models to inference, the “doing” phase of enterprise AI. Red Hat AI 3 emphasizes scalable and cost-effective inference by building on the wildly successful vLLM and llm-d community projects and Red Hat’s model optimization capabilities to deliver production-grade serving of large language models (LLMs).

To help CIOs get the most out of their high-value hardware acceleration, Red Hat OpenShift AI 3.0 introduces the general availability of llm-d, which reimagines how LLMs run natively on Kubernetes. llm-d enables intelligent distributed inference, tapping the proven value of Kubernetes orchestration and the performance of vLLM, combined with key open source technologies like Kubernetes Gateway API Inference Extension, the NVIDIA Dynamo low-latency data transfer library (NIXL), and the DeepEP Mixture of Experts (MoE) communication library, allowing organizations to:

· Lower costs and improve response times with intelligent inference-aware model scheduling and disaggregated serving.

· Deliver operational simplicity and maximum reliability with prescriptive "Well-lit Paths" that streamline the deployment of models at scale on Kubernetes.

· Maximize flexibility with cross-platform support to deploy LLM inference across different hardware accelerators, including NVIDIA and AMD.

llm-d builds on vLLM, evolving it from a single-node, high-performance inference engine into a distributed, consistent and scalable serving system that is tightly integrated with Kubernetes and designed to enable predictable performance, measurable ROI and effective infrastructure planning. These enhancements directly address the challenges of handling highly variable LLM workloads and serving massive models like Mixture-of-Experts (MoE) models.

A unified platform for collaborative AI

Red Hat AI 3 delivers a unified, flexible experience tailored to the collaborative demands of building production-ready generative AI solutions. It is designed to deliver tangible value by fostering collaboration and unifying workflows across teams through a single platform for both platform engineers and AI engineers to execute on their AI strategy. New capabilities focused on providing the productivity and efficiency needed to scale from proof-of-concept to production include:

· Model as a Service (MaaS) capabilities build on distributed inference and enable IT teams to act as their own MaaS providers, serving common models centrally and delivering on-demand access for both AI developers and AI applications. This allows for better cost management and supports use cases that cannot run on public AI services due to privacy or data concerns.

· AI hub empowers platform engineers to explore, deploy and manage foundational AI assets. It provides a central hub with a curated catalog of models, including validated and optimized gen AI models, a registry to manage the lifecycle of models and a deployment environment to configure and monitor all AI assets running on OpenShift AI.

· Gen AI studio provides a hands-on environment for AI engineers to interact with models and rapidly prototype new gen AI applications. With the AI assets endpoint feature, engineers can easily discover and consume available models and MCP servers, which are designed to streamline how models interact with external tools. The built-in playground provides an interactive, stateless environment to experiment with models, test prompts and tune parameters for use cases like chat and retrieval-augmented generation (RAG).

· New Red Hat validated and optimized models are included to simplify development. The curated selection includes popular open source models like OpenAI’s gpt-oss and DeepSeek-R1, as well as specialized models such as Whisper for speech-to-text and Voxtral Mini for voice-enabled agents.

Joe Fernandes, vice president and general manager, AI Business Unit, Red Hat, said, "As enterprises scale AI from experimentation to production, they face a new wave of complexity, cost and control challenges. With Red Hat AI 3, we are providing an enterprise-grade, open source platform that minimizes these hurdles. By bringing new capabilities like distributed inference with llm-d and a foundation for agentic AI, we are enabling IT teams to more confidently operationalize next-generation AI, on their own terms, across any infrastructure."
