AI Advances Biosafety and Risk Mitigation

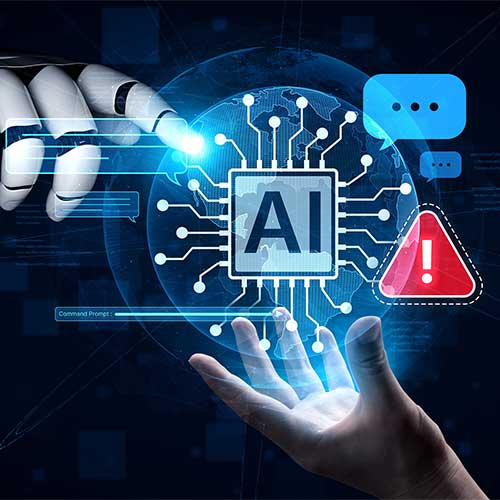

Leading artificial intelligence developers, including Anthropic, are rolling out stronger safety protections after concluding they cannot fully exclude the possibility that advanced models might assist inexperienced users in pursuing biological or chemical harm.

Over the past year, AI systems described as “co-scientists” have rapidly improved.

They can summarize complex research, recommend experimental pathways, and even guide steps involved in molecule or protein design.

Such capabilities hold enormous promise for medicine and public health, potentially accelerating drug discovery, outbreak response, and diagnostic accuracy.

Yet researchers warn the same fluency could be misused.

Early studies indicate that AI guidance might go beyond what a person could achieve through ordinary web searches, lowering the technical barriers to misuse.

Scientists caution, however, that the evidence remains preliminary and requires deeper validation.

This dual-use reality leaves policymakers facing an uncomfortable trade-off.

Restricting access too tightly could slow life-saving innovation, while broad availability might widen the risk landscape.

Developers are therefore experimenting with layered safeguards: tighter content filters, expert monitoring, staged access to sensitive knowledge, and partnerships with biosecurity specialists.

The debate is shifting from whether AI can aid science to how society governs its power—seeking a path that protects the public without choking off breakthroughs that could transform human health.
