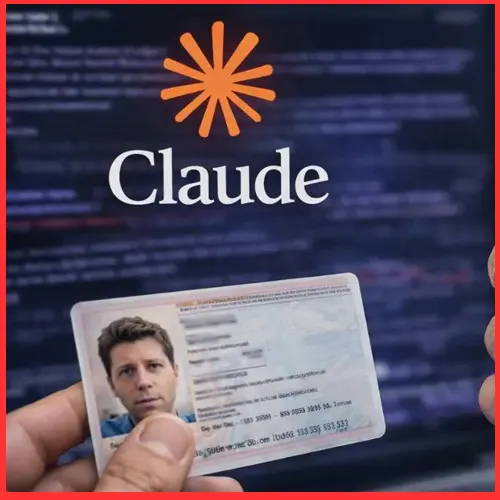

According to a recent company blog post, Anthropic has introduced identity verification for select users of its services.

"We are rolling out identity verification for a few use cases, and you might see a verification prompt when accessing certain capabilities, as part of our routine platform integrity checks, or other safety and compliance measures," the company said.

To meet these requirements, users need to submit a photo of themselves holding a valid government-issued ID, along with a live selfie captured via phone or webcam. The verification process takes up to five minutes to complete.

The move does not align with the company’s privacy-focused positioning, particularly its zero data retention policy, under which it claims not to store user data or generated responses. This contrasts with rivals such as ChatGPT, which uses user data to train its models.

Many ChatGPT users have shifted to Anthropic over concerns about ChatGPT maker OpenAI's deal with the US Department of Defense (the Pentagon) for use on its classified networks. After refusing the deal with the US government, Anthropic saw a 60% surge in its free subscriptions in February.

However, the new verification requirement has drawn criticism on social media, with some users pointing out that it is not mandated by any government directive but was introduced voluntarily by the company. Several commenters suggested the move could inadvertently benefit competitors.

For the verification process, Anthropic has partnered with Persona Identities, a third-party provider that requires users to submit a valid, undamaged government document such as a passport, driver’s license, state or provincial ID, or national identity card. The company has stated that the verification data will remain confined to the user, Anthropic, and Persona Identities, and will not be used beyond the scope of identity verification.

"Our verification data is never shared with third parties for marketing, advertising, or any purpose unrelated to verification and compliance," the blog post said.

A spokesperson told a publication that the verification requirement would apply to a "small number" of cases where the user's activity indicates fraudulent or abusive behaviour.

The arrangement also raises questions about the security of users' verification data, given recent cases of third-party service providers being hacked, such as Tata Consultancy Services in April 2025. A more pertinent example is Discord, where an October 2025 hack exposed the personal data of 70,000 users, which had been submitted for verification.
